Creating a virtual replica

Did you know?

📈 What are digital twins and who uses them?

- Digital twins use real-time data from sensors to monitor a physical object

- Engineers at organisations such as Ocado, Rolls-Royce, NASA, and Ford use them

- They can reduce maintenance costs, improve efficiency and predict future performance

- Digital twins of humans could be created for use in areas such as healthcare and retail

“If you can’t measure it, you can’t hope to control it” is one of the truisms of science and engineering and is also known as “the one Kelvin got right”. It was a favourite saying of the eminent Victorian physicist William Thomson, better known as Lord Kelvin, and is fortunately more accurate than his confident declarations that heavier-than-air flight was impossible and that X-rays were a hoax. It’s also the axiom at the heart of one of the most important technologies currently being implemented in engineering: the digital twin.

The concept of a digital twin has been around for about a decade, but it’s only in the past five years that large-scale implementation has begun to happen. An essential component of the developments known as Industry 4.0 or the ‘fourth Industrial Revolution’, digital twins make the smart connected sensors that are ubiquitous in modern engineering visible – capturing their data and putting it onto a display for process operators to see in real time. This allows them, and by extension the entire enterprise, to observe how their facility is operating, and the influence that the conditions or settings of machinery are having on the output of the plant or physical asset.


There is still some confusion over the terminology of digital twins. It is not uncommon to see a simulation intended for use in the design of a factory or plant described as a digital twin, but Mark Girolami, Sir Kirby Laing Professor of Civil Engineering at the University of Cambridge and leader of the programme for Data-Centric Engineering at the Alan Turing Institute, explains that a true digital twin is not a simulation but a representation. “The most important thing is that it uses real data from operating machinery and live processes to show what is happening,” he says.

How virtual replicas help to monitor jet engines

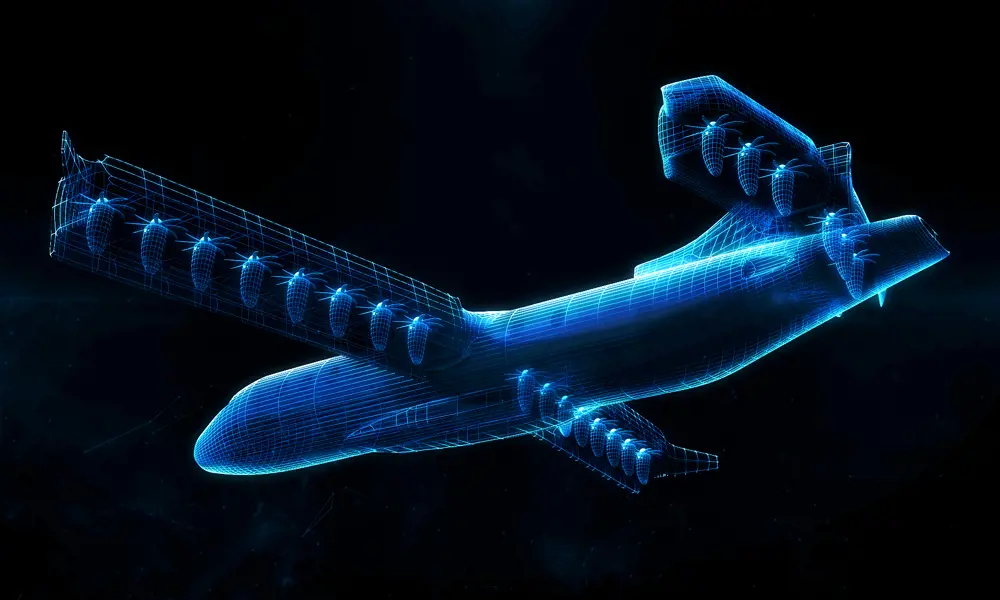

Rolls-Royce pioneered one of the earliest and most striking uses of digital twins. The aerospace giant combines the multitude of sensors embedded in its jet engines with machine learning and software analytics to create a virtual replica of the engine in operation, which continually updates itself. It takes in not only the conditions inside the engine but also the ambient environment affecting its operation.

By measuring the condition of the engine and its ambient environment, Rolls-Royce’s digital twin enables engineers at the company’s operational headquarters in Derby to monitor an engine in flight, for example between London and Jakarta, with the data relayed back by satellite link, and to observe any fluctuations in engine performance that might indicate a component in need of repair or replacement © Rolls-Royce

The machine learning and analytics functions help relate the sensor readings to the component-by-component operation of the engine and identify what is likely to be the cause of performance fluctuation. This allows the company to schedule maintenance of engines before they fail and cause aircraft to be taken out of service, damaging their operators’ income. This, in turn, helps Rolls-Royce’s business model of selling aircraft operators time in the air, or as the company expresses it ‘power by the hour’, rather than merely selling them engines. Rolls-Royce calls this strategy the ‘intelligent engine’.
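Rolls-Royce does not describe its analytics in detail here, so the following is only a minimal sketch of one common first step: flagging a reading that departs sharply from its recent baseline, using a rolling z-score. The function name, window length and threshold are illustrative assumptions, not Rolls-Royce's method.

```python
from collections import deque
from statistics import mean, stdev


def flag_fluctuations(readings, window=20, threshold=3.0):
    """Return indices of readings that deviate sharply from the recent baseline.

    Each reading is compared with the mean and sample standard deviation of
    the preceding `window` readings; a z-score above `threshold` is flagged.
    """
    history = deque(maxlen=window)
    flagged = []
    for i, value in enumerate(readings):
        if len(history) == window:
            mu = mean(history)
            sigma = stdev(history)
            if sigma > 0:
                z = abs(value - mu) / sigma
            else:
                # A perfectly flat baseline: any change at all is anomalous.
                z = 0.0 if value == mu else float("inf")
            if z > threshold:
                flagged.append(i)
        history.append(value)
    return flagged
```

Given a steady synthetic series with a single jump, it flags only the jump. Relating such flags to a specific component, as the article describes, would require models of the engine that this sketch does not attempt.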

It’s the machine learning and analytics aspects of the digital twin that have delayed its implementation. Both depend heavily on computing speed and power, which have only become widely available in the past few years. It would always have been possible to display sensor outputs remotely, and this has indeed been standard operating procedure in process plants for many years. The key advantage of a digital twin lies in relating these readings to the operation of the asset and using them to predict how its output might change.

Using digital twins for infrastructure inspection

Rolls-Royce’s use of digital twins inspired a much more down-to-earth application of the technology. Professor Lord Robert Mair CBE FREng FRS, Head of the Centre on Smart Infrastructure and Construction (CSIC) at the University of Cambridge, explains: “we wondered if we could get the same sort of results from implementing that technology on civil engineering assets, particularly railway bridges.” Static, non-mechanical structures are obviously very different from highly complex jet engines, but in the sense that they need regular maintenance and cause huge disruption if they fail, they share important features.

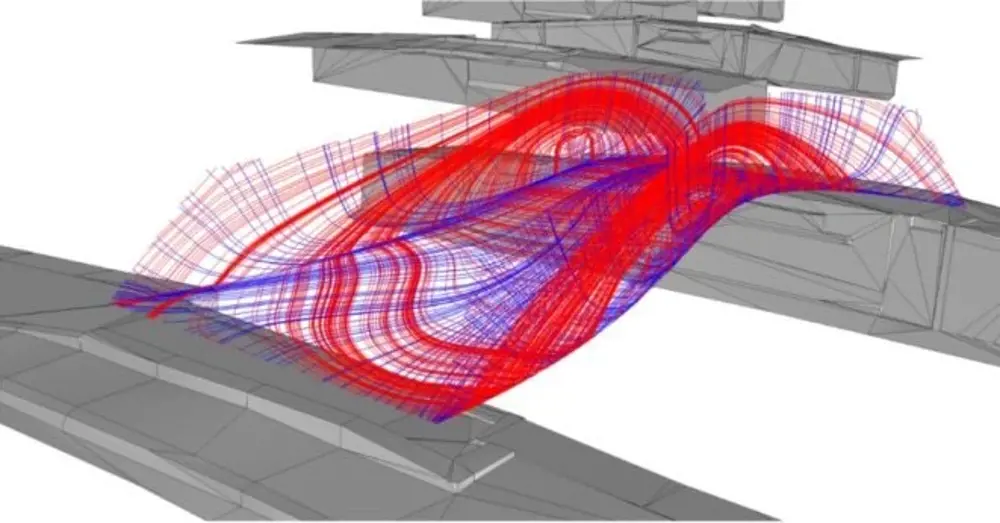

CSIC put this into practice on two railway bridges in Staffordshire. Traditionally, asset management of railway bridges depends on periodic visual inspection to detect components or structures that may be about to fail. To replace this approach, Professor Mair’s team installed fibre-optic sensors in a prestressed concrete girder bridge and a steel composite girder bridge, using the data from these to construct digital twins of both bridges. The steel bridge had 108 fibre Bragg grating (FBG) strain sensors on both the main steel I-beams and the transverse I-beams. “The sensors can measure strain and temperature at discrete points along an optical fibre,” Girolami explains. “Fibre-optic sensors are lightweight, require a minimal number of wiring cables and provide stable, long-term measurements. When laser light is shone down a fibre-optic cable, the gratings act like dielectric mirrors, reflecting only those wavelengths that match the Bragg wavelength. When the fibre-optic cable is elongated (or shortened), the wavelength of the reflected light shifts in proportion to this change in strain.” The strain is then calculated, taking account of the thermal expansion of the glass.
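The relationship Girolami describes can be written down. A grating with period Λ and effective refractive index n_eff reflects the Bragg wavelength λ_B = 2 n_eff Λ, and a commonly used model for its shift is Δλ_B / λ_B = (1 - p_e) ε + (α_Λ + α_n) ΔT, where ε is strain, ΔT is the temperature change, p_e is the fibre's photo-elastic coefficient, α_Λ its thermal expansion and α_n its thermo-optic coefficient. The sketch below removes the temperature term and solves for strain. The coefficient values are typical figures for silica fibre, assumed for illustration rather than taken from the Staffordshire installation.

```python
# Typical values for silica fibre; assumptions, not project-specific figures.
PHOTOELASTIC_COEFF = 0.22   # p_e, effective photo-elastic coefficient
THERMAL_EXPANSION = 0.55e-6  # alpha_Lambda, 1/K: thermal expansion of the glass
THERMO_OPTIC = 8.6e-6        # alpha_n, 1/K: change of refractive index with temperature


def bragg_wavelength(n_eff, grating_period_nm):
    """Bragg condition: the reflected wavelength is lambda_B = 2 * n_eff * Lambda."""
    return 2.0 * n_eff * grating_period_nm


def strain_from_shift(lambda_ref_nm, lambda_meas_nm, delta_temp_k):
    """Convert a measured Bragg wavelength shift into mechanical strain.

    Model: d(lambda)/lambda = (1 - p_e) * strain + (alpha_Lambda + alpha_n) * dT.
    The temperature contribution is subtracted before solving for strain.
    """
    relative_shift = (lambda_meas_nm - lambda_ref_nm) / lambda_ref_nm
    thermal_part = (THERMAL_EXPANSION + THERMO_OPTIC) * delta_temp_k
    return (relative_shift - thermal_part) / (1.0 - PHOTOELASTIC_COEFF)
```

With these assumed values, one microstrain shifts a 1550 nm grating by about 1.2 pm, so resolving small strains requires wavelength measurements at the picometre scale.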

The Alan Turing Institute’s programme in Data-Centric Engineering has been developing a ‘digital twin’ of the 3D-printed bridge in Amsterdam to help analyse the sensor network data, as well as conducting extensive tests of the physical printed material and using statistical methodology to understand more about the material itself © Alan Turing Institute

Trains pass over the bridges at up to 160mph in both directions. “Every time a train goes over one of these bridges, the sensors register how the structure reacts to all those moving forces and give us huge amounts of data,” Professor Mair says. “We can then use the digital twin to tell us whether the structure can stand up to those forces by relating them to the design tolerances of those two different types of girder. One thing that has been vital to this is a shift to manufacturing those structures under controlled conditions in factories and transporting them to the site to be assembled, rather than casting concrete on site.”
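Before a twin can relate loads to design tolerances, the continuous stream from each sensor has to be split into individual train passages. A minimal sketch, assuming a simple threshold rule (a hypothetical approach, not necessarily CSIC's processing): an event starts when strain rises above a threshold and ends once it has stayed below it for several consecutive samples, so the brief dips between axles do not split one train into many events.

```python
def detect_passages(strain, threshold, min_gap=5):
    """Split a strain time series into train-passage events.

    A passage starts when strain rises above `threshold` and ends once it has
    stayed at or below the threshold for `min_gap` consecutive samples.
    Returns a list of (start_index, end_index, peak_strain) tuples, where
    end_index is the last sample above the threshold.
    """
    events = []
    start = None
    below = 0
    peak = float("-inf")
    for i, value in enumerate(strain):
        if value > threshold:
            if start is None:
                start, peak = i, value
            peak = max(peak, value)
            below = 0
        elif start is not None:
            below += 1
            if below >= min_gap:
                events.append((start, i - below, peak))
                start, below = None, 0
    if start is not None:  # series ended mid-passage
        events.append((start, len(strain) - 1 - below, peak))
    return events
```

The peak strain of each event is the natural input to a tolerance check, and `min_gap` trades off merging closely following trains against splitting long ones.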

The Alan Turing Institute is also involved in the project, analysing up to 12GB of data per day and relaying this analysis to structural engineers at Cambridge. One of the complicating factors, Professor Mair says, is that the digital twin does not display the raw data from the sensors, but rather derived quantities such as stress and strain. “Establishing the live link between the asset and the twin and ensuring that what the engineers see is directly meaningful is a non-trivial task, and one where we have depended strongly on the Turing Institute team,” he adds.
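Stress is one of the derived quantities Mair mentions. For a steel member behaving elastically, it follows from measured strain by Hooke's law, σ = Eε, and can then be compared with a design allowable. The sketch below assumes a typical Young's modulus for structural steel of 200 GPa and uses a hypothetical allowable stress and review level. A prestressed concrete girder would need a different modulus, and a real assessment would follow the relevant design code rather than a single ratio.

```python
# Typical Young's modulus for structural steel; an assumption, not a project value.
STEEL_YOUNGS_MODULUS_PA = 200e9


def stress_from_strain(strain, youngs_modulus_pa=STEEL_YOUNGS_MODULUS_PA):
    """Linear-elastic (Hooke's law) stress in pascals: sigma = E * epsilon."""
    return youngs_modulus_pa * strain


def utilisation(peak_strain, allowable_stress_pa,
                youngs_modulus_pa=STEEL_YOUNGS_MODULUS_PA):
    """Derived peak stress as a fraction of a design allowable (1.0 = at the limit)."""
    return abs(stress_from_strain(peak_strain, youngs_modulus_pa)) / allowable_stress_pa


def events_to_review(peak_strains, allowable_stress_pa, review_level=0.8):
    """Indices of events whose utilisation exceeds the (hypothetical) review level."""
    return [i for i, eps in enumerate(peak_strains)
            if utilisation(eps, allowable_stress_pa) > review_level]
```

Displaying a utilisation figure rather than raw wavelengths is one way of making what engineers see "directly meaningful", in Mair's phrase.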

Although the Staffordshire projects established twins on new assets, Professor Mair points out that such capability can also be retrofitted onto existing structures. “One of the important features of the railway network in Britain is that so much of it is Victorian,” he notes. “In Staffordshire, we had the advantage of dealing with brand-new structures, but that’s very much the exception rather than the rule.” In Leeds, he adds, CSIC was involved in a project to create a digital twin of a Victorian railway viaduct that carries the main line through the city and into its main station.


“It’s a very large structure, it’s right in the middle of the city and it looked like it was in bad repair, with very large visible cracks in the brickwork. There was a concern that it would have to be entirely replaced, which would have been hugely expensive and disruptive over a very long period of time. But we retrofitted the same type of fibre-optic sensor that we had used in the new structures in Staffordshire onto this Victorian brickwork, created a digital twin and established that, despite its looks, the viaduct was in fact performing perfectly well and would do for years to come. For a relatively low investment, we showed that there was no need to spend millions of pounds.”

Ocado’s digital twins restore order

🤖 Answering ‘what if’ questions with digital twins and using automated robots

As previously reported in Ingenia, online grocer Ocado uses digital twin technology at two of its automated warehouses. “Digital twins can help us answer ‘what if?’ questions about the future such as the potential impact of climate change, disruptive changes to systems such as the impact of local energy generation and electric vehicles on the national grid, the impact of new policies or regulatory frameworks and so on,” explains Paul Clarke, Ocado CTO, in a post on the blog Data-Centric Engineering.

The automated warehouses use robots to pack customer orders into carrier bags placed in plastic crates known as totes. Identifying barcodes on the totes are scanned at junctions between aisles to ensure that the totes are following the correct route to fulfil the orders they hold, while item barcodes are used to verify that the correct items are going into the appropriate bags. Sensors around the facility help detect events such as totes slipping because of variations in friction between the crates and the surface of the conveyor belt on which they travel, as well as mechanical faults that cause delays or diversions.
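The route check on totes can be sketched as a small state machine: each tote carries its planned sequence of junctions, and every barcode scan either advances it along the route or is logged as a deviation. The `Tote` class and junction names below are hypothetical; Ocado's actual control software is not described in this article.

```python
from dataclasses import dataclass, field


@dataclass
class Tote:
    """A tote and its planned route through junctions (hypothetical model)."""
    tote_id: str
    planned_route: list[str]
    position: int = 0                       # index of the next expected junction
    deviations: list[str] = field(default_factory=list)

    def scan(self, junction: str) -> bool:
        """Record a barcode scan at `junction`; return True if it is on route."""
        on_route = (self.position < len(self.planned_route)
                    and junction == self.planned_route[self.position])
        if on_route:
            self.position += 1
        else:
            self.deviations.append(junction)  # flag for rerouting or inspection
        return on_route

    @property
    def completed(self) -> bool:
        """True once every junction on the planned route has been passed."""
        return self.position == len(self.planned_route)
```

Logging deviations rather than rejecting them outright mirrors the diversions the article mentions: an off-route tote can be recorded and rerouted rather than simply stopped.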

When planning a new automated warehouse, Ocado first produces a simulation of how the swarm of robots that pick items from storage bins will move around the space. These simulations subsequently evolve into digital twins, handling the 5,000 data points that the robots produce 1,000 times per second. This totals some 4TB of data per day for a swarm of 3,500 robots in one warehouse. The data is handled by machine-learning systems that perform predictive maintenance, while the digital twin optimises the behaviour of the swarm and updates the parameters of the control system that runs the robotic hive.
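The figures quoted give a sense of scale. Taking them at face value, and assuming that the 5,000 data points are sampled across the whole swarm (the text does not say whether they are per robot) and that 1 TB means 10^12 bytes, a quick calculation gives the sustained data rate and the storage implied per data point.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def points_per_day(points_per_sample, samples_per_second):
    """Total data points generated in one day at a constant sampling rate."""
    return points_per_sample * samples_per_second * SECONDS_PER_DAY


def bytes_per_point(bytes_per_day, daily_points):
    """Average storage implied per data point."""
    return bytes_per_day / daily_points


daily_points = points_per_day(5_000, 1_000)   # assumes 5,000 points for the whole swarm
daily_bytes = 4e12                            # 4 TB, taking 1 TB = 10**12 bytes
rate_mb_per_s = daily_bytes / SECONDS_PER_DAY / 1e6

print(f"{daily_points:,} points per day")
print(f"{rate_mb_per_s:.1f} MB/s sustained")
print(f"{bytes_per_point(daily_bytes, daily_points):.2f} bytes per point")
```

Under these assumptions the warehouse streams about 46 MB/s, or roughly 9 bytes per data point.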

Optimising the growing conditions of underground crops

Digital twins are having an effect even below ground. In Clapham, south London, an unusual business occupies tunnels 33 metres underground, originally built as deep-level shelters to protect residents from bombs during the Second World War. Hydroponics farm Growing Underground has filled the complex with banks of shelving holding systems to grow salad leaves and ‘micro-greens’ (intensely flavoured, quickly grown herbs) for London restaurants and outlets including Waitrose and Marks & Spencer. Growing food in the centre of the city greatly reduces the ‘food miles’ of the products: the farm can cut and package produce and deliver it to New Covent Garden Market within four hours. While the farm was under construction, engineers from the University of Cambridge were installing sensors to construct a digital twin.

In an underground farm, nothing is natural. Instead of sunlight, the plants are supplied with artificial light from LEDs, emitting wavelengths selected to optimise photosynthesis. The flow of water and nutrients into their growing medium is controlled, as are the temperature and humidity of the environment. All these factors influence the yield of the crops being grown in the tunnels; cameras and load cells measuring the weight of the plants provide an indication of how much vegetation is growing.


This made the operation an ideal candidate for digital twin technology, particularly to optimise its energy usage. Lighting, heating and air circulation all rely on electricity, and although the farm produces 12 times more per unit area than a conventional greenhouse and uses 70% less water, it also uses four times more energy per unit area. The farm occupies approximately the same area as a tennis court, but by 2022 is expected to produce over 60 tonnes of produce per year. Its electricity is generated by renewable sources, but to be sustainable it needs to ensure that energy use is minimised while crop growth is maximised. “From day one, the Growing Underground founders, Richard Ballard and Steve Dring, bet on data to help them, and we’ve assisted them from the start of their data journey,” explains Melanie Jans-Singh, a PhD student studying energy models and digital twins for urban integrated agriculture, who installed the sensor network in the tunnels.
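The per-area figures can be combined into a more telling comparison. If the farm uses four times the energy per unit area but grows twelve times the produce per unit area, the energy needed per unit of produce is 4/12, roughly a third of the greenhouse figure. The short calculation below makes that explicit; it assumes both ratios are measured against the same conventional-greenhouse baseline.

```python
def relative_per_unit_produce(resource_ratio_per_area, yield_ratio_per_area):
    """Resource use per unit of produce, relative to the baseline greenhouse."""
    return resource_ratio_per_area / yield_ratio_per_area


YIELD_RATIO = 12.0   # produce per unit area vs a conventional greenhouse
ENERGY_RATIO = 4.0   # energy per unit area vs a conventional greenhouse

energy_per_unit = relative_per_unit_produce(ENERGY_RATIO, YIELD_RATIO)
print(f"Energy per unit of produce: {energy_per_unit:.2f} x the greenhouse figure")
```

The same ratio is what a twin would try to push down further, by cutting energy use without cutting yield.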

The network consists of 25 sensors measuring 89 variables, transmitting to eight Raspberry Pi data loggers housed in the tunnels, which send their data to a server in Cambridge and on to an online horticultural data platform. “What the digital twin shows is better than being in the tunnels in person,” says Jans-Singh. “It can monitor, learn, feedback, and forecast information that will improve working.”
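A data logger of this kind typically buffers readings and forwards them in batches. The class below is a hypothetical sketch, not the software running in the tunnels. Each reading records the sensor, the variable (one sensor can report several, which is how 25 sensors yield 89 variables) and a timestamp, and batches are passed as JSON to an injected `send` function, which on a Raspberry Pi might post them to a server.

```python
import json
import time


class SensorLogger:
    """Buffer sensor readings and hand them off in JSON batches (hypothetical).

    `send` is any callable that accepts a JSON string, for example a function
    that POSTs to a server; injecting it lets the logger be tested offline.
    """

    def __init__(self, logger_id, send, batch_size=50):
        self.logger_id = logger_id
        self.send = send
        self.batch_size = batch_size
        self.buffer = []

    def record(self, sensor_id, variable, value, timestamp=None):
        """Buffer one reading; flush automatically when the batch is full."""
        self.buffer.append({
            "sensor": sensor_id,
            "variable": variable,
            "value": value,
            "t": time.time() if timestamp is None else timestamp,
        })
        if len(self.buffer) >= self.batch_size:
            self.flush()

    def flush(self):
        """Send all buffered readings as one JSON payload."""
        if not self.buffer:
            return
        payload = json.dumps({"logger": self.logger_id, "readings": self.buffer})
        self.send(payload)
        self.buffer = []  # cleared only after send() returns without error
```

Because the buffer is cleared only after `send` returns, a batch that fails to send stays buffered for the next attempt.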

South London-based Growing Underground can harvest, pack and deliver produce to central London all within four hours. The use of digital twins at the facility keeps operations running smoothly © Growing Underground/Paul Marc Mitchell (left image)/Martin Cervanansky (right image)

A digital twin helped the farm understand how the tunnel environment was affected by the external weather, in particular by hot days, which affected the operation of the lighting and of the fans used to extract and circulate air. Subsequently, integrating forecasting models into the digital twin allowed the system to suggest operational changes for the day ahead and then tell operators how successful these were. “The farm manager checks the dashboard and sensor data at the beginning and end of the workday, so we’ve set the system to provide a forecast at 4am ready for the first workers arriving at 6am to help with decision-making for the day, and another at 4pm to warn about possible conditions that will happen overnight just before they leave,” explains Jans-Singh. “This might mean reducing ventilation if the farm is likely to be too cold, temporarily adding a heater in a specific location, or trialling different light settings.” The twin’s users can look at measurements of environmental variables in specific areas, to help them work out why plants might be growing differently from one place to another.
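The twice-daily forecast cycle can be sketched as two small functions: one works out when the next forecast is due (4am or 4pm, as Jans-Singh describes), and one turns a temperature forecast into suggestions of the kind she mentions. The comfort band of 18 to 24 °C is a placeholder assumption, not the farm's setpoint, and the real twin uses forecasting models rather than a fixed rule.

```python
from datetime import datetime, timedelta

FORECAST_HOURS = (4, 16)  # 4am and 4pm runs, as described for the farm


def next_forecast_run(now: datetime) -> datetime:
    """Return the next scheduled forecast time at or after `now`."""
    for hour in FORECAST_HOURS:
        candidate = now.replace(hour=hour, minute=0, second=0, microsecond=0)
        if candidate >= now:
            return candidate
    # Both of today's runs have passed: use the first run tomorrow.
    tomorrow = now + timedelta(days=1)
    return tomorrow.replace(hour=FORECAST_HOURS[0], minute=0, second=0, microsecond=0)


def suggest_actions(forecast_temps_c, low_c=18.0, high_c=24.0):
    """Map a temperature forecast to operator suggestions (placeholder band)."""
    suggestions = []
    if min(forecast_temps_c) < low_c:
        suggestions.append("reduce ventilation or add temporary heating")
    if max(forecast_temps_c) > high_c:
        suggestions.append("increase ventilation or trial different light settings")
    return suggestions or ["no change"]
```

Keeping the schedule separate from the rule means the rule can later be replaced by a learned model without changing when operators receive their forecasts.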

It isn’t just yield that the twin helps to optimise. The team is also trying to tweak growing conditions to increase the sugars and starches in the crops, to ensure good flavour and nutritional value.

The Growing Underground team is developing a second site, a disused warehouse that will be able to supply a larger area of London. Here, the live twin feeds into a simulation of this new farm, which the Cambridge researchers are using to help specify equipment and design the layout to keep the warehouse environment stable and minimise energy usage. Jans-Singh is currently working on incorporating machine vision into the farm system so that the cameras monitoring the plants can provide more information on growth levels and colour of the crops.
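Machine vision for growth and colour often starts with something as simple as measuring how much of an image is green canopy. One widely used index is excess green, ExG = 2g - r - b, computed on chromatic coordinates (each channel divided by R + G + B). The sketch below illustrates that index with a hypothetical threshold; it is not the method being developed for the farm, and under coloured LED grow lights images may need colour correction before such an index is meaningful.

```python
def excess_green(r, g, b):
    """Excess-green index on chromatic coordinates: ExG = 2g - r - b."""
    total = r + g + b
    if total == 0:
        return 0.0  # black pixel: no colour information
    rn, gn, bn = r / total, g / total, b / total
    return 2 * gn - rn - bn


def canopy_cover(pixels, threshold=0.1):
    """Fraction of (R, G, B) pixels classified as vegetation by an ExG threshold."""
    if not pixels:
        return 0.0
    green = sum(1 for (r, g, b) in pixels if excess_green(r, g, b) > threshold)
    return green / len(pixels)
```

Tracking canopy cover from the same camera positions over successive days gives a simple growth curve that could be compared with the load-cell weights.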

Digital twins in cardiac medicine

💓 How patients could benefit from the use of a digital twin

A complex version of the digital twin is being investigated for use in improving cardiac medicine. In a paper published in March 2020 in the European Heart Journal, a team led by Pablo Lamata of King’s College London’s Department of Biomedical Engineering explained how a digital twin could be used to help patients. The team combined data from electrocardiograms with mechanistic models and statistical techniques, both to improve diagnosis and to guide treatment of heart conditions. The mechanistic models are based on the electrical activity inside and between the cells of the cardiac muscle, and provide a mathematical description of the heart’s electrical activity. For example, one metric based on simulations of heart activity and circulation can differentiate patterns of muscle activity, and thereby predict the response to cardiac resynchronisation using a pacemaker.
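Research cardiac models track many ion currents across large numbers of cells, but the idea of a mechanistic model of excitable tissue can be illustrated with the textbook FitzHugh-Nagumo equations. This two-variable model is far cruder than the models used in the paper; it simply shows how differential equations for a fast, voltage-like variable and a slow recovery variable reproduce repeated excitation under a steady stimulus and a quiet resting state without one. The parameters are FitzHugh's classic values, and forward Euler integration is used for simplicity.

```python
def fitzhugh_nagumo(current=0.5, a=0.7, b=0.8, eps=0.08,
                    dt=0.01, t_end=200.0, v0=0.0, w0=0.0):
    """Integrate the FitzHugh-Nagumo model with the forward Euler method.

        dv/dt = v - v**3 / 3 - w + current   (fast, voltage-like variable)
        dw/dt = eps * (v + a - b * w)        (slow recovery variable)

    Returns the trace of v at every time step.
    """
    v, w = v0, w0
    trace = [v]
    for _ in range(int(round(t_end / dt))):
        dv = v - v ** 3 / 3 - w + current
        dw = eps * (v + a - b * w)
        v += dt * dv
        w += dt * dw
        trace.append(v)
    return trace


def count_beats(trace, level=1.0):
    """Count upward crossings of `level`, i.e. excitation events."""
    return sum(1 for prev, cur in zip(trace, trace[1:]) if prev < level <= cur)


if __name__ == "__main__":
    paced = fitzhugh_nagumo(current=0.5)
    resting = fitzhugh_nagumo(current=0.0, v0=-1.2, w0=-0.625)
    print("beats with stimulus:", count_beats(paced))
    print("beats at rest:", count_beats(resting))
```

A digital twin replaces such generic parameters with values fitted to an individual patient's measurements, which is where the statistical techniques come in.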

The emerging use of digital twins in healthcare and beyond

The success of digital twins in industry is now beginning to spark interest in whether digital twins could be created for humans. These could be used in healthcare – for example, connecting the sensors on a patient undergoing surgery to a digital twin could help anaesthetists ensure that patients remain in the healthiest condition possible, while intensive care units are also an obvious target. In a less obviously benign application, data collected from online activity and wearables might be used to construct digital twins of consumer behaviour that could be used to inform strategy for retailers, insurers and other commercial operations.

As a virtual representation of an object or system that spans its whole life, updates using real-time data, and uses simulation, machine learning and reasoning to help decision-making, digital twins give engineers the ability to see how physical assets are working, now and in the future. One of the many examples of this is the use of a digital twin to help the Mayflower autonomous ship cross the Atlantic Ocean. It’s no surprise, then, that digital twins are already helping engineering businesses stay ahead of the game.

***

This article has been adapted from "Creating a virtual replica", which originally appeared in the print edition of Ingenia 87 (June 2021).

Contributors

Stuart Nathan

Author

Mark Girolami FRSE is the Sir Kirby Laing Professor of Civil Engineering at the University of Cambridge. Since October 2021, he has been the Chief Scientist of The Alan Turing Institute. Before that, he was Chair of Statistics in the Department of Mathematics at Imperial College London. In 2018, he was appointed Lloyd’s Register Foundation/Royal Academy of Engineering Research Chair. As the former Programme Director for Data-Centric Engineering at the Alan Turing Institute, he led a team of more than 150 researchers, working on projects with Network Rail, Rolls-Royce, National Air Traffic Services and Transport for London, among many others.

Professor Lord Robert Mair CBE FREng FRS is a geotechnical engineer, Emeritus Sir Kirby Laing Professor of Civil Engineering at the University of Cambridge and a Past President of the Institution of Civil Engineers. He was appointed an independent crossbencher in the House of Lords in 2015 and until recently was a member of its Select Committee on Science and Technology.

Melanie Jans-Singh was a PhD student at the University of Cambridge studying energy models and digital twins for urban integrated agriculture. She now works as the Lead Technical Energy Advisor for the Department for Energy Security and Net Zero.
